My Morning Entropy

I revisited the idea of complextropy last night and I had this sense that it ties into the power of abstractions – that it’s going to tell me something fundamental about the status of living things. I’m not sure. I couldn’t figure it out last night, but I was tired then. Here’s my attempt in the morning.

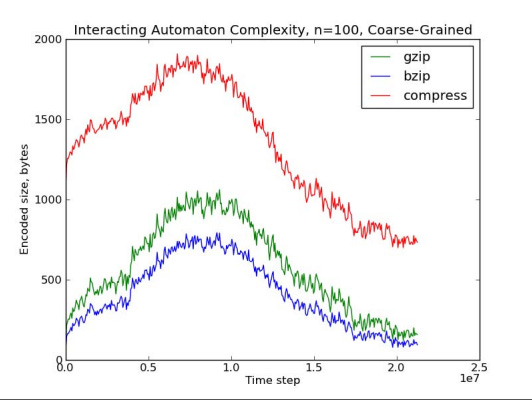

Complextropy is a name for this interesting phenomena. The following is an image from Scott Aaronson’s blog post where he coins the idea.

Complextropy is this idea that as entropy monotonically increases, as the milk goes from sitting on top of the coffee to dispersing randomly throughout it, this notion of complexity that humans have is not quite as linear. We find the middle section the most “interesting.” Our complexity notions seem to have this inverted U shape, which peaks in the middle, in this space when the milk has swirled around just a bit, not quite uniform, not quite separated.

A better middle picture (in my humble opinion):

This picture is poetic to me, this swirling mass. There’s something really interesting about it. Something really complex. Aaronson would say it has high complextropy. But from entropy’s point of view, it’s not as complex as it could get if we let it all settle into complete uniformity. When the milk and coffee become one light brown homogeneous liquid, when the milk is randomly distributed, that’s when entropy is highest.

Another example – the string 000000 is pretty boring, the string 9837451 is pretty meaningless, but the string 13579 is interesting. The Kolmogorov complexity of these strings, though, behaves roughly monotonically, just as entropy does, with no special status for the odd numbers. In other words, it’s highest at 9837451 and lowest at 000000.
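Kolmogorov complexity itself is uncomputable, but compressed size is a common stand-in for it. Here is a minimal sketch, assuming zlib as the compressor and using much longer analogues of the three strings, since a compressor’s fixed overhead swamps anything only six characters long. The function name and the lengths are my own choices for illustration.

```python
import random
import zlib


def compressed_size(text: str) -> int:
    """Bytes after zlib compression: a rough upper-bound proxy for Kolmogorov complexity."""
    return len(zlib.compress(text.encode("ascii"), 9))


# Longer analogues of 000000, 13579 and 9837451 (the lengths are arbitrary choices).
odds = "".join(str(n) for n in range(1, 100_000, 2))  # "13579111315..."
zeros = "0" * len(odds)
rng = random.Random(0)  # fixed seed so the run is repeatable
noise = "".join(rng.choices("0123456789", k=len(odds)))

for name, text in [("zeros", zeros), ("odds", odds), ("noise", noise)]:
    print(f"{name:>5}: {compressed_size(text)} bytes")
```

The sizes should climb from the zeros to the odd numbers to the random digits, with nothing marking out the odd numbers as the interesting case.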

Sad Simulacrums

So what is it, exactly, that peaks in the middle? One of Aaronson’s grad students had a cool idea, the one that feels connected to life somehow. She said that maybe you get the inverted U by first abstracting over the physical states (i.e., by first coarse graining – by taking the average of each 3x3 region of space). If you then take the compressed file sizes of those coarse grainings, this shape will emerge. And it does:

Where you can see that in the middle, when the coffee is pure purl, the compressed file size of the coarse graining is highest and it’s lower at all other points. An inverted U.

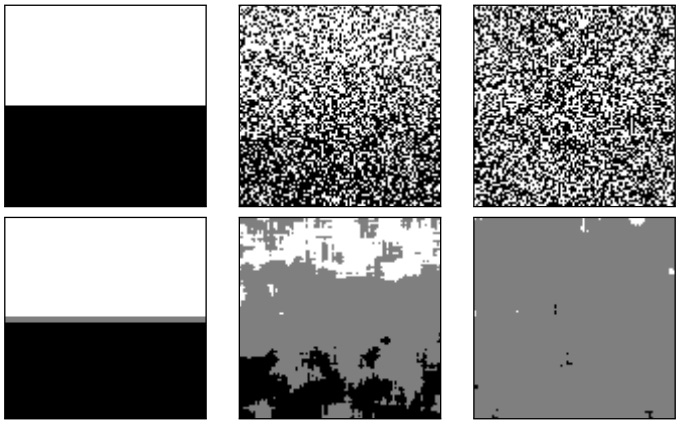

You can see the non coarse grained states above and the coarse grained states below in these sad coffee simulacrums (taken from their paper):

And you can see how this inverted U would emerge. Take the first case, with the milk just sitting on top of the coffee. When you coarse grain it, i.e., when you take the average of each 3x3 section of the simulated coffee cup, you basically get the same thing back, white sitting on black. In the final case, with a completely uniform distribution, the average of each 3x3 will roughly be the same, just one continuous grey mass. But in the middle – in the middle these coarse grainings are harder to compress, the averages mean something different one 3x3 section to the next.
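The argument above can be tried out in a few lines. This is only a sketch under my own assumptions, not the simulation from the paper: instead of stirring a cup step by step, it samples each cell as milk with a probability that depends on height (the `spread` knob is made up), and it uses 10x10 blocks bucketed into three levels rather than raw 3x3 averages, because with blocks as small as 3x3 a fully mixed cup still shows a lot of block-to-block noise. Compressed size via zlib stands in for complexity.

```python
import math
import random
import zlib

H, W, B = 100, 300, 10  # cup height, cup width, coarse-graining block size (assumed values)


def make_cup(spread, rng):
    """Sample a cup of 0s (coffee) and 1s (milk), with milk likelier near the top.

    spread is a made-up mixing knob: 0 is fully separated, math.inf is fully mixed.
    """
    cup = []
    for y in range(H):
        if spread == 0:
            p_milk = 1.0 if y < H / 2 else 0.0
        else:
            # Logistic profile centred on the middle of the cup.
            p_milk = 1.0 / (1.0 + math.exp((y - (H - 1) / 2) / spread))
        cup.append([1 if rng.random() < p_milk else 0 for _ in range(W)])
    return cup


def coarse_grain(cup):
    """Average each BxB block, then bucket it: 0 mostly coffee, 1 mixed, 2 mostly milk."""
    buckets = bytearray()
    for top in range(0, H, B):
        for left in range(0, W, B):
            milk = sum(cup[y][x] for y in range(top, top + B) for x in range(left, left + B))
            mean = milk / (B * B)
            buckets.append(2 if mean > 0.75 else 0 if mean < 0.25 else 1)
    return bytes(buckets)


def compressed_size(data):
    return len(zlib.compress(data, 9))


rng = random.Random(0)  # fixed seed so the run is repeatable
fine_sizes, coarse_sizes = [], []
for label, spread in [("separated", 0), ("partial", 14), ("mixed", math.inf)]:
    cup = make_cup(spread, rng)
    fine = bytes(cell for row in cup for cell in row)
    fine_sizes.append(compressed_size(fine))
    coarse_sizes.append(compressed_size(coarse_grain(cup)))
    print(f"{label:>9}: fine={fine_sizes[-1]:>5} bytes, coarse={coarse_sizes[-1]:>3} bytes")
```

The coarse sizes should peak at the partly mixed cup, while the fine-grained size of the separated cup stays far below the other two. In this toy version the peak comes mostly from blocks sitting near the bucket thresholds, so it is an illustration of the shape rather than evidence for it.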

This coarse graining is how they tried to quantify the idea of Sophistication. I don’t fully understand it, but I take it to mean something like: Soph(x), for a string x, is the length of the shortest program that outputs a set of strings containing x, such that x is not a special member of that set (special has a concrete sense in algorithmic information theory, basically it shouldn’t be any easier to “locate” in the set than any other string).

As you can see, the Sophistication of 000000 would be small since the set it belongs to (of which it is not special) is something like “print x n times in a row.” And the Sophistication of 9837451 is small since it belongs to the set generated by “print x random numbers.” But the odd numbers are a bit more complicated, something like “print all natural number sequences.”
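For what it’s worth, the set-based definition I’m gesturing at is usually written something like the following. I’m reconstructing it rather than quoting it, so treat the exact form as an assumption: K is Kolmogorov complexity, S ranges over finite sets of strings that contain x, and c is a small slack constant.

```latex
\mathrm{soph}_c(x) \;=\; \min \bigl\{\, K(S) \;:\; x \in S,\ \ K(S) + \log_2 |S| \le K(x) + c \,\bigr\}
```

The constraint says that describing the set and then pointing at x inside it costs about as much as describing x directly, which is the sense in which x is not a special member.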

The interesting thing here is that Sophistication is abstracted. Now instead of just looking at a program that generates a single string, like in Kolmogorov complexity, you’re looking at a program that generates a set of strings, in other words, a mapping from microstates (strings) to a macrostate (program).

Recursive Rabbit Holes

But okay, back to the coffee. That middle one in the bottom panel -- there’s something interesting about it. This high complextropy. Captured in abstractions.

First of all, what is the real power of abstractions that I keep talking about? I wrote a post on this earlier, titled “on the power of abstractions,” where I go into more detail. It was mostly poetic, though. I don’t have a formal definition yet. But it’s something like this: the abstractions that humans use (e.g., apple, ball, person, fairness), “do more” than other abstractions they could have had. Where “do more” is a little out of my league as a non-physicist, but I expect it’s something like: upon interacting with a privileged abstraction, like the human concept of “ball,” it has more ripples throughout the physical universe than a non-privileged abstraction would.

In other words, by having the abstraction “ball,” we get access to this high level thing – this cluster of atoms which, when clustered in the right way, begets leverage – simple modes of use, like kicking the ball which causes all of those atoms to move at once. That is, you don’t need to personally move every single rubber bit to move the ball. You need only impinge on a small subsection of them to leverage the entire motion, to get all of the ball atoms to do things. Like you get more out of it than you put in. The thing I keep picturing is this riding amplitude throughout the wave equation, big ripples.

Which now that I write it seems like it can’t be true, since energy is conserved. You can’t get out more than you put in. It also seems like it can’t be true since when you undraw all of these lines, around the person and the ball, it doesn’t really look like there’s any leverage at all, just a bunch of atoms bumping into each other in lockstep.

This is a massive problem that I have difficulty looking past, this inability to find a good (objective?) bridge between the physical and design levels (a la Dennett). But my conviction that abstractions are real in this way is so strong that I think I am going to figure out this conundrum at some point. Or at least, I’m resting on that being true. And I sort of want to spend some of my time assuming that I’ve solved it because otherwise I will be stuck in the bottom of the rabbit hole inside of a rabbit hole for a very long time.

The other motivation for this stance is that living organisms seem to make use of leveraged abstractions quite a bit. When an embryo is forming, a single voltage difference in a single cell can cause an entire eye to grow on a leg, weeks downstream of the intervention. It’s like living things have somehow harnessed this language of leverage – these little buttons they can press which cascade out into all of this complexity. This landscape of triggers, simple modes of interaction, which allow them to grow up out of the Laplacian universe and into the land of the living.

Some Sweetener, Please

So this is what the power of abstraction is in living things, this ability to leverage the environment to their benefit. Abstractions are also these many to one things, these microstate to macrostate mappings. This is exactly what coarse graining is. Exactly what Sophistication is talking about. And I have the sense that complextropy is pointing towards some deep truth about the universe. So what is it? What is the sense in which these two things might be connected? This layer of abstractions that living things are built out of, these abstractions with real power, and this sense in which abstractions give rise to this inverted U?

The first thing I feel drawn to saying is something like: abstractions set up this sweet spot – if you look at the world in terms of the fundamental bits, whatever that means, in the atoms or the fields or what have you, at the physical level (a la Dennett), then you don’t get anything that is especially useful to humans. But if you layer over this space with abstractions, then you find this sweet spot, this place in the middle where abstractions give you affordances.

They aren’t all that useful in the other two coffee scenarios: in the first, the coarse graining results in basically the same, boring thing. In the last state, you just get a grey, vacant plain. But in the middle, they take that swirling mass and they give you something to work with, at least to our eyes.

Is this the same sense in which abstractions have power in living things? In which they take advantage of these small triggers that cascade out into all of this complexity?

Anthropic Abstractions

There’s this neat idea that Stephen Wolfram has – this thing called computational irreducibility. It’s the notion that for some phenomena, the only way to predict the outcome is to run it forward. There are no shortcuts, no compressions you can make which would enable you to “jump ahead,” you just have to wait and see. He thinks that most things in the universe are like this. And I’m not sure if it’s true, but it certainly feels that way. Feels like humans have carved out this section of reality and made it their own, these little pieces they can predict, out of this whole massive churning universe we find ourselves in.
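One way to get a feel for this is an elementary cellular automaton. The sketch below uses Rule 30, which is my choice of example rather than anything named above; as far as I know, nobody has found a shortcut formula for its centre column, so the only way to learn the value at step n is to run all n steps. The function name is made up for illustration.

```python
def rule30_center_column(steps):
    """Run Rule 30 from a single live cell and return the centre cell at each step."""
    width = 2 * steps + 3  # wide enough that the pattern never reaches the edges
    mid = width // 2
    cells = [0] * width
    cells[mid] = 1
    column = [1]
    for _ in range(steps):
        # Rule 30: new cell = left XOR (centre OR right).
        cells = [
            cells[i - 1] ^ (cells[i] | cells[(i + 1) % width])
            for i in range(width)
        ]
        column.append(cells[mid])
    return column


print("".join(str(bit) for bit in rule30_center_column(40)))
```

The rule is three operations long, yet the printed column looks patternless, which is the flavor of “no jumping ahead” that the idea is pointing at.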

And this gives abstractions an almost anthropic feel. Living things need abstractions to make sense, need predictive power in order to function. If I couldn’t predict anything about my environment, had no idea that the smell of roast would lead me to the roast itself, I’d just be floundering around helplessly. Maybe I would run into the roast eventually, by happenstance, but if not all that many roasts existed in the world I’d earn a Darwin award pretty quickly. All organisms have some amount of prediction, I think. Even extremely basic ones like E. coli predict that there will probably be more chemicals if there are some here now, implicitly encoded via the strategy of following the gradient.

And predictions run on abstractions. You don’t need prediction in the Laplacian universe, the one devoid of ifs. Prediction is only necessary for minds: things which cannot fit the entire universe into their heads. It is a map-property.

So this idea, that most things are computationally irreducible, means that the abstractions we landed on are special. That life emerged in the little bubble of predictability, the small section where maps are useful, amidst a vast sea of an otherwise unpredictable universe. And it is anthropic in this sense; that we have maps because we could only ever find ourselves in the part of the universe where abstractions are useful.

And I have this sense, that the middle state, the one of high complextropy, that it is somehow more useful than the others. That the globs that emerge after coarse graining are the types of things that can have ripples in the universe, that we can predict, because they are real.

Can Aliens Play Ball?

I’m not sure I’m right. But here is a picture I have in my head. Imagine that there is a ball in front of you, and now imagine some alien creature who does not have this abstraction, who sees only random seeming pixels where you see your ball. Your map gives you the affordance to kick the ball – to move your foot through space – and to cause the great rippling. The amplitudes in the wave equation going wild as the ball roars down the street. Waves. But your alien friend has no such affordance, can do no such thing.

And I have no idea if this great rippling through the wave equation is “actually happening,” I’m not a physicist, but it just seems like what has to be true. Abstractions give you affordances to leverage small actions into big ones. Both within you and without you. Living organisms learned how to commandeer this technique in their own creation – entire organs downstream of single triggers. This incredibly complex cascade. We have it inside of us, but we also have it outside – in the maps we have of the world. A world of affordances, running on abstractions, blind to the universe of unpredictability engulfing everything else.

Abstractions allow for this leverage, allow you to compress many physical states into one, and somehow only move that one around. Somehow you can manipulate the clump of black, in its entirety, as if it were whole, instead of pushing and pulling all of the pixels underneath it. Everything in the microstate follows, but in the realm of maps, you are impinging on a single entity, causing it to sail through the world. Somehow, in some areas abstractions afford themselves; give themselves up as simple triggers in the environment. And living things have figured out how to play in this dreamworld.

Of course all of this relies on our maps, just like everything. Predictability is relative to a map, and so is computational irreducibility, I claim. I’m not actually sure what Wolfram would say about that. I have the sense that he thinks it’s fundamentally true. Maybe that’s so. But I’m not ready to rule out that what we consider to be high entropy, what we think of as computationally irreducible, is some other map's predictive paradise. But for now it doesn’t matter, for now we only need to see how our abstractions are indeed powerful.

Anthropic Affordances

And so this might be what I think complextropy is. A measure of the small pockets in the universe where abstractions give you the most affordances. This sweet spot, engendering the conditions for life. This middle level where abstractions are meaningfully advantageous. Not so simple as to be almost completely useless (there are not all that many affordances in the initial coffee cup configuration), not so random as to be pointless (there are basically zero affordances in the homogeneous zone). But this sweet spot, the one with several different shapes, moving around, offering themselves up to whomever's map lets them take advantage – leverage those small actions into waves.

And yes, maybe it’s telling that you can’t actually do all that much with the swirling milk in a coffee cup. It’s hard to leverage those liquid threads. But my guess is that the general thing is true – and that we find swirling coffee mesmerizing because humans are tracking where their abstractions are useful. And there just tends to be more that you can do with high complextropy things. Even though it is hard to leverage those milky eddies, it sure seems like if you could, it would be meaningful in a way that the uniformly brown coffee would not be. Let alone a cup equally separated. There are more affordances at high complextropy, generally speaking.

I suppose I should come up with an example where this fits. It’s hard to think of something that has these three states. Certainly between medium and high entropy it’s straightforward: I can do more with an intact ball, kick it far, make more ripples, than I can with an exploded one, with rubber bits scattered to the wind. I’m not sure what the low entropy counterpart of this example would be – maybe a ball that can only be in one of two sides of a box or something? This somehow feels like I’m mixing metaphors, but the intuition pump is still clear: when things exist in low entropy states, it means there is just less going on. There are fewer microstates, it takes fewer bits to talk about the situation, and so there are fewer affordances. Even though it’s predictable, it’s boring. Being able to make the ball go from one side to the other makes wavelets instead of waves. It doesn’t offer up the same amount of leverage.

So it turns out that it’s true. I do think there is something special about complextropy, something that is deeply connected to life, to all things with maps. I think complextropy describes the spaces where abstractions, our abstractions, are most useful. Where they offer up the most affordances. And there’s something a little anthropic to that: our maps exist because they can. They make sense in our little pocket of the universe. They meaningfully shape our tiny corner, shape it in waves.

This Information is Just Right

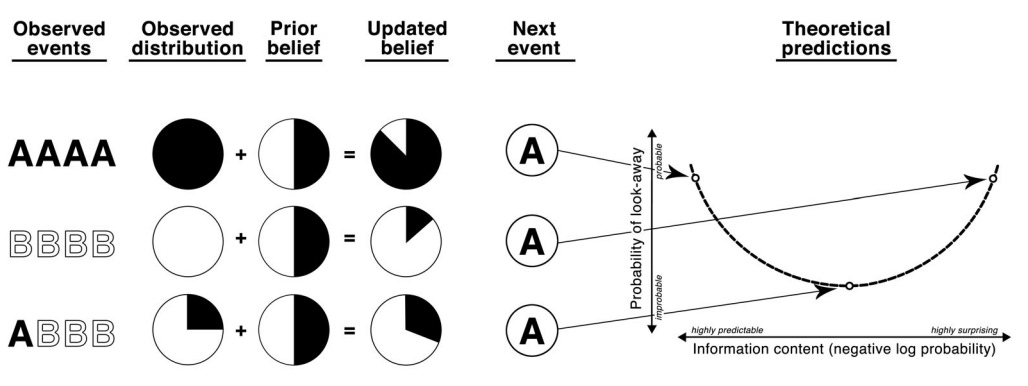

There’s a finding in the curiosity literature that feels related. It’s that people seem to follow what researchers call a Goldilocks curve – a U-shape with respect to information content. I.e., they preferentially attend to intermediate levels of information. This schematic shows the gist of the phenomenon:

Basically, if you’ve seen the sequence AAAA and the next item you see is A, you’re not all that interested, and you’re likely to stop paying attention. If you’ve seen BBBB and then see A, you might also stop paying attention, because there doesn’t seem to be a very discernible pattern there, i.e., it’s not very useful. But in the middle, with ABBB, an A next is more informationally meaningful, at least that’s what these scientists claim. They think that people are preferentially curious about events which lie in this Goldilocks zone of complexity.

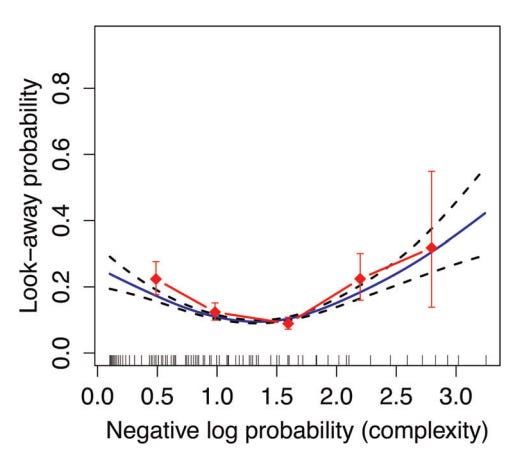

The data seems to match up (I have many qualms with how this was done, but in general I agree with the results):

Here negative log probability is basically just how improbable the stimulus was given what they had already seen (based on an ideal Bayesian reasoner). So, if an outcome is very likely (low negative log probability) or if it is very unlikely (high negative log probability), infants tend to be less interested. But there’s a sweet spot of intermediate complexity which they find more salient.
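To make the quantity concrete, here is a small sketch that scores the three sequences from the schematic. It assumes add-one (Laplace) smoothing over a two-letter alphabet as a stand-in for the ideal Bayesian reasoner; that is my simplification, not the model used in the study, and the function name is made up.

```python
import math


def surprisal_of_next(history: str, nxt: str, alphabet: str = "AB") -> float:
    """Negative log2 probability of seeing nxt after history, under add-one smoothing."""
    count = history.count(nxt)
    p = (count + 1) / (len(history) + len(alphabet))
    return -math.log2(p)


for history in ["AAAA", "ABBB", "BBBB"]:
    bits = surprisal_of_next(history, "A")
    print(f"{history} then A: {bits:.2f} bits")
```

Under these assumptions an A after AAAA comes out near 0.26 bits, after ABBB near 1.58 bits, and after BBBB near 2.58 bits, so the ABBB case lands in the middle of the x axis.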

It seems like a bit of a stretch to call negative log probability complexity. I think a better term would have been surprisal for this particular study, since the experiment consisted of viewing a bunch of boxes which either reliably or unreliably revealed objects like balls and rattles. In other words, the stimuli themselves weren’t “complex,” but they could be more or less surprising based on what you had seen before.

Regardless, it feels interesting and related. Related because of the sweet spot – something about the usefulness of the information at that level.

I claim that the true measure here, the real y axis, is Sophistication. I claim that A isn’t all that exciting after seeing AAAA because the Sophistication of AAAAA is low. Similarly, the Sophistication of BBBBA is lower than that of ABBBA. And I claim that this is related to why people find ABBBA to be a more interesting sequence. Because at the abstracted layer, the one of programs creating sets of strings, ABBBA is harder to compress.

What’s Between a Dark Cave and a Static TV?

There’s a question I haven’t addressed head on yet. Why is high complextropy less compressible? Naively you’d think we’d want our abstractions to be the most compressed, i.e., the ones which confer the most predictive benefit. But this is the age-old question, the one that poses difficulty for purely predictive processing accounts of cognition: why don’t we just gravitate to dark caves where we can predict everything perfectly? And on the flip side, if we’re curious at all, why aren’t we enthralled by static TV screens, these uniformly random events that we have no predictive advantage over? Andy Clark’s answer from Surfing Uncertainty was hyperpriors instilled by evolution – we have some sense that we should be in the middle of these two things. But why? I think the answer has to do with complextropy and the way it interacts with what abstractions are useful.

Because there’s this other side to compressibility – if it’s fully compressible it’s also pretty boring. And boring means what, exactly? It means something like “we can’t do much with it.” It’s like all the handles go missing, in low complextropy states. When the abstracted coffee cup is all black and white or all uniform, there’s just not that much you can do in those worlds. When we reach heat death there aren’t all that many levers you can pull. And when it’s all in one side of the box, there’s only “one lever” afforded to you at an abstracted level.

So compressibility of the abstract is picking up on how many levers one has, I claim. At low and high entropy there are very few and in the abstracted middle, with all of those different clusters, you have more, you can do more in the system.

This isn’t true in the non-abstracted world. In the world of pixels, or fields or atoms, acting on one of them doesn’t usually do much. Well, acting on them at all is an incorrect frame. In the world of atoms, in the universe without lines, “I” don’t really exist, and “I” don’t act on anything at all, everything moves in lockstep. But if we do draw those lines, if we do look at the coffee coarse grained, then these levers begin to appear.

I still haven’t bridged this gap, but I claim that there is something special about abstractness, especially the maps that we gravitate around – that those abstractions do more in the world, somehow. They certainly do more relative to our maps (kicking a ball does more than kicking some scattered rubber), but I also think they somehow do more in the world itself. And I claim that complextropy is the measure of where on the continuum our abstractions hold the most weight, where they are most useful – not just predictively (although this is important too), but to actually make waves in the universe itself.